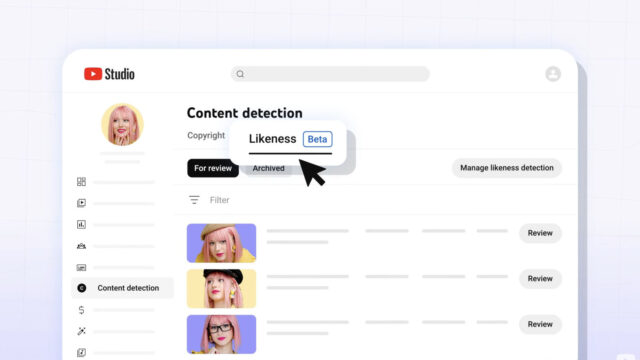

YouTube likeness detection is moving from a narrow creator-safety experiment to a tool that many more adult creators can use.

The tool sits inside YouTube Studio’s content detection area. It is meant to help creators find videos that appear to use their face or likeness without permission, especially as AI-generated deepfakes become easier to make and harder to spot at a glance.

A practical response to AI copies

YouTube already gives creators copyright-detection tools. Likeness is a different problem. A fake video can dodge normal copyright filters while still borrowing a creator’s identity, face or public image.

That is why the rollout matters. Creators are now dealing with AI clips that can confuse fans, damage reputations or make scams look more believable. A detection page does not make the risk disappear, but it gives creators a place to review matches and decide what to do next.

The feature also arrives while other platforms are trying to label or limit synthetic media. We have seen similar pressure around AI safety features, including OpenAI’s Trusted Contact feature for ChatGPT. The common thread is simple: AI tools are moving faster than the old reporting systems were built for.

Still not a magic shield

Detection tools can miss things. They can also flag clips that still need human review before anyone acts on them. YouTube will still have to prove that the system catches enough harmful copies without drowning creators in false alarms.

Even so, this is the right direction. If platforms profit from creator identity, they also need better ways to help those creators defend it.

The bigger challenge is speed. A creator may need to respond quickly if an AI copy starts spreading, especially if it is attached to a scam, political message or explicit content. YouTube’s tool will be more useful if review, removal and appeals are easy to understand from inside Studio.